Key Takeaways

- Split testing (A/B or bucket testing) compares two versions of a webpage, ad, or app element to determine which performs better.

- Split (A/B) testing can improve conversion rates, reduce bounce rates, and optimize ROI through data-driven insights.

- This method involves setting goals, testing variations, analyzing outcomes, and implementing winners.

- A/B testing and multivariate testing differ significantly, yet many people assume they are the same.

What if a single word could double your results, or quietly ruin them? Most people never notice the tiny details that shape decisions: a headline that hooks (or doesn’t), a button that invites a click (or gets ignored), a call to action that either converts or disappears into the noise. It all seems small, until it isn’t. Instead of leaving you to guess what works, split testing uses real user behavior to show you what actually drives results.

You don’t need to be a data expert to get started, just a curious mindset and a willingness to experiment. In this blog, we’ll explore what A/B split testing is, how it works, why it’s so effective, the tools you can use, and the best ways to make the most of it.

Stop Guessing, Start Testing – Join 7SearchPPC!

What is Split Testing?

Split testing, also called A/B testing or bucket testing, is a method to compare two versions of something to see which one works better. It is widely applied in online advertising, website optimization, and app development.

In this approach, two versions of a webpage or advertisement (Version A and Version B) are created. These versions are shown to different user segments at the same time, and performance is measured using predefined metrics such as click-through rates, conversions, sign-ups, or sales.

By analyzing the collected data, businesses or marketers can identify which version delivers superior results.

For instance, an advertiser can create two versions of an ad: one with a catchy headline and another with a clear, informative message. These ads are shown to different segments of the target audience during the same time frame. By checking which ad gets more clicks or conversions, the advertiser can see which one works better and use it to improve results.

Benefits of Using Split Testing

Split (A/B) testing offers many benefits, including:

1. Lower Bounce Rates

A high bounce rate signals that visitors aren’t engaging with your website or landing page, which hurts its performance and effectiveness. When users leave quickly, it indicates that your content, design, or user experience isn’t resonating with them. By creating two versions of a page that differ in one element, such as the headline, call-to-action button, or layout, you can determine which version keeps visitors engaged.

For example:

If Version A produces a noticeably lower bounce rate than Version B, Version A is the better-performing option. You should implement that version to keep visitors engaged for longer.

2. Data-Driven Decisions

Split or bucket testing gives you a clear, data-driven way to understand what actually works, rather than relying on guesswork or opinions. Instead of assuming what your audience prefers, you validate decisions with real insights.

Companies that consistently use A/B testing often report significant improvements. Some industry studies cite conversion rate increases ranging from 10% to over 30% when experiments are run and optimized systematically.

It also helps reduce risk. Rather than rolling out major changes blindly, you can test small changes, learn quickly, and scale only what proves effective. Over time, this creates a cycle of continuous improvement where every decision is backed by evidence, not instinct.

Almost 60% of organizations believe that A/B testing is extremely valuable for enhancing conversion rates. Source: TrueList

3. Conversion Rates Gradually Improve

Conversion rates are one of the most critical metrics businesses or advertisers track. When they start to decline, it’s a clear signal that something isn’t working—and that opportunities are being missed. This is where split testing becomes useful.

When you try different versions again and again, you learn what people respond to better. Even small changes can make a difference. Slowly, more visitors start taking action, like clicking or signing up. It’s not a sudden jump, but a steady improvement that grows over time and gives better results.

4. Higher ROI and Cost Savings

A/B testing helps you see what truly connects with your audience, so your budget goes toward ideas that perform well. You avoid spending on elements that don’t add value. Over time, this leads to better returns, smarter spending decisions, and marketing efforts that deliver more impact without increasing costs.


How Split Testing Actually Works

Before you run split tests, it’s essential to understand how they work, as this helps you identify the most effective version. Here’s an overview of how split (A/B) testing works.

1. Define Your Goal

Every split test starts with a goal: the outcome you want to improve. That’s why defining clear goals is important. Common goals include:

- Increasing website clicks or conversions

- Improving email open rates

- Boosting engagement on an app

- Optimizing online ad performance

Think in terms of measurable outcomes, e.g., “I want 10% more people to click the signup button.”

2. Pick What You Test

You can’t test everything at once, so focus on the elements most likely to make the biggest impact. These could be:

- Headlines or button text

- Images or videos

- Email subject lines

- Layouts or colors

Tip: Change only one element at a time so you know which change caused the difference.

3. Split Your Audience

After selecting what to test, randomly divide your visitors or users into groups:

- One segment of the audience is exposed to Version A

- The remaining segment is exposed to Version B

This randomization ensures the results are fair and not biased by external factors.
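To make the random split concrete, here is a minimal Python sketch of one common approach: hashing a user ID so each visitor lands in a stable bucket. The function name `assign_variant`, the experiment name, and the choice of SHA-256 are illustrative assumptions, not the method any particular testing tool uses.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one of the variants (hypothetical helper)."""
    # Salting with the experiment name gives each experiment an independent split.
    key = f"{experiment}:{user_id}".encode("utf-8")
    # Use the first 8 bytes of the SHA-256 digest as a large pseudo-random integer.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return variants[bucket % len(variants)]

if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "homepage-headline")] += 1
    print(counts)  # close to a 50/50 split
```

Because the assignment depends only on the user ID and experiment name, a returning visitor keeps seeing the same version, which keeps the two groups clean.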

4. Run the Test

Let the test run long enough to collect meaningful data; stopping too early can produce misleading results. The right testing duration depends on:

- How much traffic you get

- How large an effect you want to detect
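Traffic and expected effect can be turned into a rough sample-size estimate. The sketch below uses the standard normal-approximation formula for comparing two conversion rates. The 5% baseline, 10% relative lift, 95% confidence, 80% power, and 2,000 daily visitors are hypothetical inputs, and `sample_size_per_variant` and `days_needed` are illustrative names.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per version for a two-sided two-proportion test."""
    p1 = baseline                        # current conversion rate (Version A)
    p2 = baseline * (1 + relative_lift)  # rate you hope Version B reaches
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

def days_needed(n_per_variant, daily_visitors, variants=2):
    """Days to reach the sample size if traffic is split evenly across versions."""
    return math.ceil(n_per_variant * variants / daily_visitors)

n = sample_size_per_variant(baseline=0.05, relative_lift=0.10)
print(n, days_needed(n, daily_visitors=2_000))
```

With these assumed inputs, the estimate comes out at roughly 31,000 visitors per version, or about a month of traffic at 2,000 visitors per day. Halving the lift you want to detect roughly quadruples the required sample.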

5. Analyze Results

Compare the performance of each version:

- Look at metrics like click-through rates, conversions, or revenue

- Determine which version performed better

- Use metrics that match what you are testing. For example, if you are comparing two versions of an ad, focus on clicks, impressions, and conversion rate.

Statistical A/B testing tools help determine whether observed differences are statistically significant or simply due to chance, enabling you to accurately assess the reliability of your results.
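As a rough illustration of what such tools compute, here is a two-proportion z-test, one common frequentist check for a difference in conversion rates. Many tools use other methods (including Bayesian ones); the function name and the conversion counts below are hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best estimate of the shared rate if A and B are truly equal
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: 200 vs. 250 conversions out of 5,000 visitors each
z, p = two_proportion_z_test(200, 5_000, 250, 5_000)
print(f"z = {z:.2f}, p-value = {p:.3f}")
```

A p-value below your chosen threshold (commonly 0.05) suggests the difference is unlikely to be due to chance alone. With these hypothetical counts, the p-value is about 0.016.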

6. Implement the Winner

After analyzing the results, you can identify the version that delivers the outcome you were aiming for. Once you have a clear winner:

- Make the winning version the default and roll it out.

- Use the insights to guide future tests.

Important: Split testing is an ongoing process, not a one-time fix. Each result should inform the next test, so keep your analysis focused on the areas that matter most.


Who Can Use Split Testing

A/B testing is a widely used method for comparing two versions to see which performs better, and it’s not limited to big companies. Many different people and teams can use it to reach their objectives. Here’s an overview of typical users and why they use it:

1. Marketers & Advertisers

They use split testing to compare:

- Ad creatives (images, headlines, CTAs)

- Email subject lines and content

- Landing pages

Goal: Increase conversions, clicks, and ROI.

2. Website Owners & Bloggers

They test:

- Page layouts

- Headlines

- Call-to-action buttons

Goal: Increase engagement, time on site, and sign-ups.

3. UX/UI Designers

Designers experiment with:

- Page layouts

- Button placements

- Navigation structures

Goal: Improve user experience and ease of use.

4. App Developers

Mobile and web app teams test:

- Onboarding flows

- Notifications

- Feature rollouts

Goal: Increase user retention and app usage.

5. Performance Marketers

Performance marketers take a broader, data-driven approach across the full funnel. They test:

- Funnel steps (landing page, checkout, upsell flow)

- Conversion events (click vs purchase optimization)

- Offers (discounts, bundles, free trials)

Goal: Enhance measurable results such as conversions, revenue, and customer lifetime value by relying on data instead of assumptions.


Split Testing Best Practices: Unlock Higher Conversions Every Time

Getting meaningful results from split testing isn’t just about running experiments; it’s about having a clear strategy behind them. When done right, split (A/B) testing can unlock powerful insights. Here are some best practices for split testing.

Single-Element Testing

If you change too many things at once, you’ll never know what actually made the difference. That’s not split testing—that’s guesswork. Split testing is about clarity. It’s about identifying what’s working, what’s not, and why.

When you tweak multiple elements at once, like headlines, CTAs, buttons, and layouts, you muddy the results. Instead of insights, you end up with confusion, wasted time, and disappointing outcomes.

The better approach? Keep it simple. Test one element at a time and focus on what truly matters. This way, each result provides valuable insight, and every improvement is intentional rather than accidental. Start by identifying the single most important element you want to test, for example:

- Headline A vs Headline B

- Red Button vs Blue Button

- Short Form vs Long Form Content

Focus on High-Impact Areas

Focus on what truly moves the needle because not everything carries equal weight. The real impact comes from identifying and prioritizing the few elements that make the biggest difference. Prioritize pages and components that directly affect conversions, as small tweaks here can produce big gains.

High-impact areas for improving engagement or conversion rates include:

- Landing pages

- Pricing pages

- Checkout flows

- Call-to-action (CTA) buttons

Don’t Stop Too Soon

Stopping an A/B test the moment you see early results is a common and costly mistake. Early performance is often driven by randomness rather than real differences. Acting on this “noise”, especially if you check results repeatedly, can push your chance of declaring a false winner well above the intended 5%, in some setups beyond 25%. The smartest approach? Be patient and let the data speak for itself.

Why You Should Let Tests Run Their Course:

- Collect meaningful data: Small sample sizes can be misleading. The longer your test runs, the more reliable your results become.

- Reach statistical significance: Before making any decision, confirm that the difference meets your chosen significance threshold (commonly 95% confidence). No test is ever 100% certain, but this makes it unlikely that your results are just due to chance.

- Avoid the temptation to peek: Checking results too frequently and stopping when things “look good” can lead to biased decisions and inaccurate conclusions.

- Ensure consistent performance: Run your test over full weeks so it captures different days and user behaviors, validating its real-world impact.

Rushing decisions based on early signals can cost you growth, while disciplined split testing leads to insights you can trust.
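The cost of peeking can be seen with a small simulation. The sketch below runs hypothetical A/A tests, where both versions are identical, so every “winner” is a false positive. It compares checking for significance after every batch of visitors against checking once at the planned end. The 10% conversion rate, 20 checks, and 100 visitors per version per check are assumptions chosen for illustration.

```python
import math
import random
from statistics import NormalDist

def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pool * (1 - pool) * (1 / n_a + 1 / n_b))
    if se == 0:  # no conversions (or only conversions) in both groups
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def simulate_false_wins(sims=500, looks=20, batch=100, rate=0.10, alpha=0.05, seed=1):
    """Simulate A/A tests and return (peeking, fixed-horizon) false-positive rates."""
    rng = random.Random(seed)
    peeking_wins = fixed_wins = 0
    for _ in range(sims):
        conv_a = conv_b = n = 0
        declared_early = False
        for _ in range(looks):
            # Both versions share the same true rate, so any "winner" is a false positive.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if p_value(conv_a, n, conv_b, n) < alpha:
                declared_early = True  # a peeker would stop here and ship a "winner"
        peeking_wins += declared_early
        # The disciplined tester checks only once, at the planned sample size.
        fixed_wins += p_value(conv_a, n, conv_b, n) < alpha
    return peeking_wins / sims, fixed_wins / sims

peeking, fixed = simulate_false_wins()
print(f"False wins with peeking: {peeking:.1%}; with one final check: {fixed:.1%}")
```

Because the peeking strategy gets 20 chances to cross the threshold instead of one, its false-positive rate typically comes out several times higher than the roughly 5% seen with a single final check.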

Follow the “Big Swing” vs. “Incremental” Strategy

If your conversion rate is below 2%, testing button colors is unlikely to move your results much. At that stage, you need bigger, bolder changes.

- The Big Swing: Test a completely different page layout or a totally different offer (e.g., “Free Trial” vs. “Book a Demo”). Don’t just tweak button colors—rethink the entire experience. These kinds of changes go beyond surface-level design and tap into what truly motivates people.

- Incremental: Once you find a winning “Big Swing,” then you refine the headlines and images to squeeze out those extra 1–2% gains.

However, one of the most important elements that often gets overlooked is psychological triggers, the underlying factors that truly motivate users to take action. It’s not just about how things look, but also about how they make users feel and how they respond. Here are some key psychological triggers you should be testing:

Psychological Triggers to Test

| Trigger | Implementation Idea |
|---|---|
| Social Proof | Test “Join 5,000+ others” vs. “Trusted by industry experts.” |
| Urgency | Test “Offer ends at midnight” vs. “Limited stock available.” |
| Authority | Test adding a “Featured in [Magazine]” logo bar. |

Multivariate Testing Vs Split Testing: Key Differences Between the Two

Split testing (A/B testing) is a well-established method. Multivariate testing is less widely used but can offer additional insights. Let’s explore how the two methods differ.

We have already covered what split testing is, so let’s first look at what multivariate testing means.

What is Multivariate Testing?

Multivariate testing (MVT) is a method for testing variations of several elements on your webpage, ads, or apps at the same time to determine which combination performs best. Rather than limiting insights to a single change, multivariate testing shows how different elements interact. This makes it a powerful method for improving user experience, increasing engagement, and driving conversions.

Multivariate Testing vs. Split Testing (A/B Testing)

While both multivariate testing and split or a/b testing are used to optimize performance, they differ significantly in scope, complexity, and the type of insights they provide.

| Factor | Split Testing | Multivariate Testing |
|---|---|---|
| Versions Tested | Two versions (Version A vs. Version B) | Multiple versions and combinations simultaneously |
| Complexity | Simple and easy to implement | More complex due to multiple versions and combinations |
| Traffic Needed | Relatively low traffic | High traffic for statistically significant results |
| Speed | Faster results due to fewer versions | Slower, as multiple combinations are tested |
| Insight Type | Identifies which single version performs better | Identifies the best-performing combination of elements |
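The traffic difference in this table follows from simple multiplication: a full-factorial multivariate test creates one version for every combination of element variations. In this sketch, the image file names, the second call to action, and the 5,000 visitors needed per version are hypothetical; the headlines come from the ad example in this post.

```python
from itertools import product

def mvt_combinations(elements):
    """List every combination of element variations (a full-factorial design)."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]

elements = {
    "headline": ["Shop the Latest Summer Styles!", "Get 30% Off Summer Collection Today!"],
    "image": ["outdoor-photo.jpg", "studio-photo.jpg"],  # hypothetical file names
    "cta": ["Shop Now", "Buy Today"],                     # "Buy Today" is hypothetical
}
combos = mvt_combinations(elements)
visitors_per_version = 5_000  # hypothetical sample size needed per version
print(len(combos))                         # 8 versions to test
print(len(combos) * visitors_per_version)  # 40000 visitors, vs 10000 for a two-version A/B test
```

Adding a third option to any element, or a fourth element, multiplies the number of versions, and the traffic needed, again.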

When to Use Multivariate Testing

Multivariate testing is particularly useful when:

- You want to optimize multiple elements on a single page.

- You have sufficient website traffic to support complex experiments.

- You aim to understand how different elements interact.

- You are focused on fine-tuning user experience.

Top Split Testing Tools to Consider

There are many A/B testing tools available, and you can choose the one that fits your needs. Here are some tools that can help you run A/B tests.

VWO

VWO is an all-in-one conversion optimization platform that you can use for A/B testing. It helps you identify areas that need improvement and uncover potential growth opportunities. Some benefits of using the VWO platform for split testing include:

- It uses Bayesian-powered SmartStats, designed for A/B testing, that provide faster, more intuitive, and actionable insights compared to traditional frequentist methods.

- Very marketer-friendly (minimal coding needed)

- Helps you understand why a version performs better, with detailed insights.
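To show the general idea behind Bayesian A/B statistics, here is a minimal Beta-Binomial sketch that estimates the probability that Version B’s true conversion rate beats Version A’s. It is not VWO’s SmartStats implementation; the uniform prior, the function name `prob_b_beats_a`, and the conversion counts are illustrative assumptions.

```python
import random

def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under uniform Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each rate: Beta(1 + conversions, 1 + non-conversions)
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws

# Hypothetical results: 200 vs. 250 conversions out of 5,000 visitors each
print(f"P(B beats A) = {prob_b_beats_a(200, 5_000, 250, 5_000):.1%}")
```

With these hypothetical counts, the estimate lands around 99%. A direct statement like “B is very likely better” is often easier to act on than a p-value.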

Optimizely

Optimizely is a powerful, enterprise-level experimentation platform used mainly by large companies to test everything from website layouts to backend features. It is known for its detailed analytics.

Let’s take a look at the benefits this platform offers.

- You can test the entire product, not just page designs.

- Great for companies running continuous experimentation at scale.

- The platform is approachable for split testing and offers extra support for teams new to experimentation.

Adobe Target

Adobe Target comes from Adobe, a widely trusted name in digital marketing software. Choosing this platform for A/B testing is a strategic move for businesses that need to scale personalization beyond simple web page variations. Here are some of the advantages it offers for split (A/B) testing.

- AI-powered optimization: It uses Adobe Sensei and multi-armed bandit testing to automatically allocate more traffic to the best-performing variation, maximizing results during the test.

- The platform provides an easy three-step guided process to set up and launch tests that determine what works best for your users.
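Multi-armed bandit testing shifts traffic toward the better performer while the test is still running. As an illustration of the general technique, and not Adobe Target’s own algorithm, here is a minimal Thompson sampling sketch. The true conversion rates of 2.5% and 4.5% are hypothetical and, in a real test, would be unknown.

```python
import random

def thompson_sampling(true_rates, rounds=10_000, seed=42):
    """Simulate Thompson sampling; return how many visitors each version received."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible conversion rate for each version from its Beta posterior...
        samples = [rng.betavariate(s + 1, f + 1) for s, f in zip(successes, failures)]
        # ...and send this visitor to the version with the highest draw.
        arm = max(range(len(samples)), key=samples.__getitem__)
        # Simulate the visitor's response using the (normally unknown) true rate.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]

traffic = thompson_sampling([0.025, 0.045])  # hypothetical true rates for A and B
print(f"Version A: {traffic[0]} visitors, Version B: {traffic[1]} visitors")
```

Over the simulated visitors, most traffic ends up on the stronger version. The trade-off is that the weaker version receives fewer visitors, so a bandit favors results during the test over a precise measurement of the difference.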

Example of Split Testing for an Advertising Campaign

Below is a hypothetical A/B test for an ad campaign to help you understand the process.

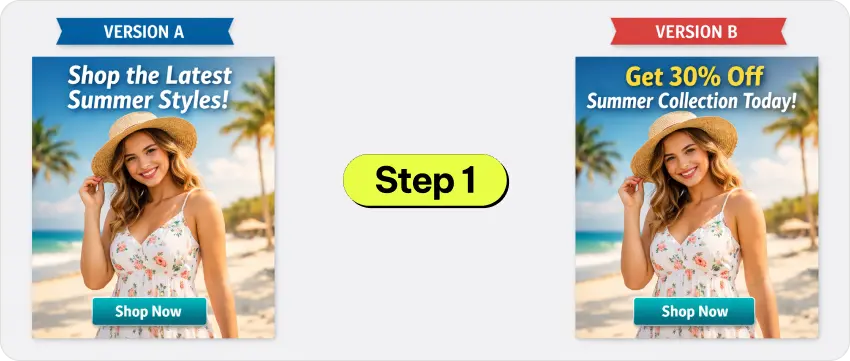

Scenario: An advertiser for a clothing brand wants to improve its online ad performance. It has decided to use A/B testing to achieve better results.

Goal: Increase Click-Through Rate (CTR)

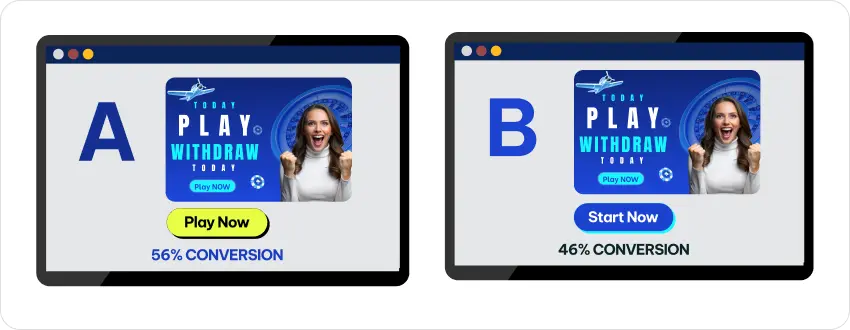

1. Creates Two Variations (A and B)

The advertiser creates two versions of one campaign, called Version A and Version B. The goal is to determine which headline drives more clicks.

Both versions are visually identical, sharing the same image, call to action, and layout; only the headline changes. Keeping every other component the same ensures an accurate performance comparison.

Version A

- Headline: “Shop the Latest Summer Styles!”

- Image: Model wearing a summer outfit

- Call-to-action: Shop Now

Version B

- Headline: “Get 30% Off Summer Collection Today!”

- Image: Model wearing a summer outfit

- Call-to-action: Shop Now

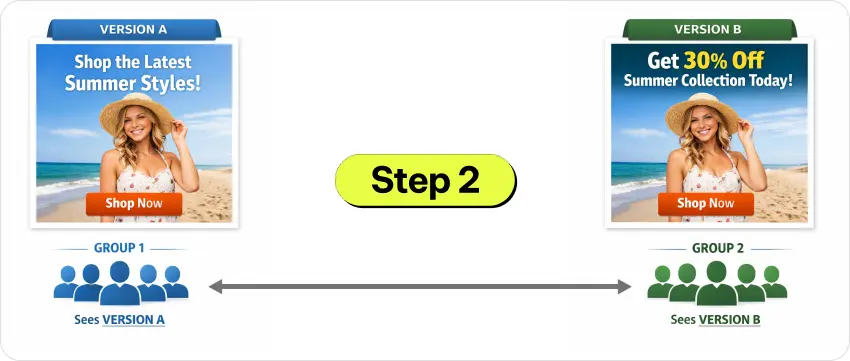

2. Splits the Audience

After creating both versions, the advertiser randomly divided the target audience into two equal groups.

- Group 1 sees Version A

- Group 2 sees Version B

This ensures each ad version reaches a similar audience for a fair comparison.

3. Conducts the Test for Both Versions

The advertiser runs both ads over a fixed 7-day period, then evaluates their performance using key metrics such as Click-Through Rate (CTR), the percentage of users who click an ad after seeing it.

4. Analyzes the Results

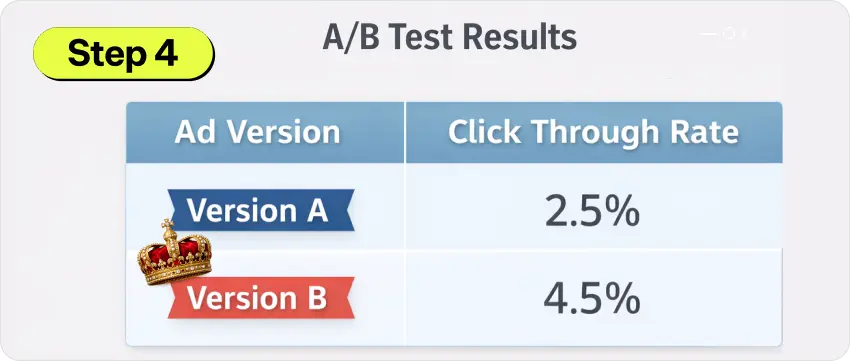

After the test ends, the advertiser analyzes the results and finds that:

| Ad Version | Click-Through Rate (CTR) |
|---|---|
| Version A | 2.5% |
| Version B | 4.5% |

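Winner: Version B has a higher CTR than Version A (4.5% vs. 2.5%). Provided the test collected enough impressions to reach statistical significance, Version B is the clear winner.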
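The example does not state how many impressions each ad received, and that number decides whether a 2.5% vs. 4.5% CTR gap is trustworthy. The sketch below computes CTR (clicks divided by impressions), the absolute and relative lift, and an approximate 95% confidence interval for the difference at two hypothetical impression counts. The function name `compare_ctr` and the impression counts are assumptions for illustration.

```python
import math

def compare_ctr(clicks_a, imps_a, clicks_b, imps_b, z=1.96):
    """Return absolute lift, relative lift, and an approximate 95% CI for CTR_B - CTR_A."""
    ctr_a = clicks_a / imps_a
    ctr_b = clicks_b / imps_b
    diff = ctr_b - ctr_a
    # Unpooled standard error of the difference between two proportions (Wald interval)
    se = math.sqrt(ctr_a * (1 - ctr_a) / imps_a + ctr_b * (1 - ctr_b) / imps_b)
    return diff, diff / ctr_a, (diff - z * se, diff + z * se)

# Same 2.5% vs. 4.5% CTRs as the example, at two hypothetical traffic levels
for imps in (400, 10_000):
    clicks_a, clicks_b = round(imps * 0.025), round(imps * 0.045)
    diff, lift, (low, high) = compare_ctr(clicks_a, imps, clicks_b, imps)
    print(f"{imps} impressions each: +{diff:.1%} absolute, +{lift:.0%} relative, "
          f"95% CI {low:.2%} to {high:.2%}")
```

At 400 impressions per version the interval includes zero, so the gap could still be chance; at 10,000 it does not. The relative lift is 80% in both cases, which shows why a large percentage lift alone does not prove a winner.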

5. Optimizes and Scales

Once the advertiser identifies the winning version, the process does not stop, as further optimization continues for other ad campaigns.

- Scale Winning Ad: Once a winner emerges, the advertiser makes it the flagship ad with full confidence.

- Continue Testing: The advertiser keeps experimenting by swapping CTAs, tweaking colors, rotating images, and refining headlines to discover new opportunities.

Conclusion

To get better outcomes from split (A/B) testing, you first need to understand the concept clearly. We have covered what split testing is, how it works, who can use it, and the best practices for success. Finally, we looked at multivariate testing and how it differs from split testing.

Frequently Asked Questions (FAQs)

What is a/b testing?

Ans: A/B testing, also known as split testing and bucket testing, refers to an experimental method that involves testing two versions to identify the most effective one.

Why is split testing important?

Ans: Split testing helps businesses make data-driven decisions, reduce guesswork, and improve conversion rates. Overall, it helps you find the best-performing versions to achieve effective results.

What elements can be tested in A/B testing?

Ans: You can test headlines, CTAs, images, layouts, colors, email subject lines, and landing pages.

What are common mistakes in split testing?

Ans: Common mistakes include changing multiple elements at once, stopping tests too early, and ignoring statistical significance.

What is the difference between split testing and A/B testing?

Ans: There is no real difference: split testing and A/B testing are two names for the same method.

What is multivariate testing?

Ans: Multivariate testing is a technique that simultaneously evaluates multiple versions and their combinations to determine the best-performing setup.